Seeing Google cars navigating our streets and highways, with arrays of spinning sensors and antennas bristling from their roofs, gives us the impression that the technology involved is complex and expensive. Until recently, it was: Wired reported that early Google cars carried multiple $80,000 LIDAR systems, and entrants in DARPA (Defense Advanced Research Projects Agency) challenges often sported over a quarter-million dollars in esoteric devices that could, on occasion, spot the Holy Spirit in the vicinity. (Your editor made that last part up.)

Sebastian Thrun sitting on the 2005 DARPA Challenge winner. Sebastian heads up several Google developments, including Google Cars.

Mike Ramsey, writing for the Wall Street Journal, suggests those prices may be going down – way down. “A Silicon Valley startup says it has solved several of the issues that might plague the introduction of autonomous vehicles — primarily the cost of the equipment.

“Quanergy Systems Inc., of Sunnyvale, Calif., says it will offer a light detection and ranging sensor — or LIDAR — next year that costs only $250 and is the size of a credit card. In 2018, it is promising a postage stamp-sized sensor for $100 or less. It’s all made possible by a solid-state laser system, says Chief Executive Louay Eldada, whose PhD at Columbia University is the basis of the technology.”

Even today, Ford and others testing self-driving systems use a series of whirring, somewhat myopic devices to find their way – a bit like a blindfolded person getting a tug from a near-sighted guide dog on one hand, an audible cue from a sensor on their cane, and myriad other clues from dozens of other individually limited units – each squinting out into a series of obstacles and hazards.

Most LIDAR units are expensive, with the cheapest running around $8,000, and they don’t work well in rain or snow. People probably won’t be charmed by their cars looking like DARPA test vehicles. LIDAR measures objects up to a few hundred feet away, which would limit its usefulness in flight, where approaching objects are usually farther away than in a typical driving scenario and moving much faster. The video below shows the 2007 DARPA Urban Challenge, with a collision between two competitors and interference from the Jumbotron preventing one entrant from even starting.

Consider the difficulties overcome in the last eight years to reduce the size of sensors and tuck them into the vehicles. Mom probably won’t want to pull up at Ridgemont High with the roof rack looking like something out of a bad sci-fi film. The kids would love it for all the wrong reasons. On an airplane, the weight and aerodynamic penalties would make this a non-starter.

Quanergy, though, has a little box, the S3, measuring 3.5 inches by 2.4 inches by 2.4 inches, easily integrated into the contours of the vehicle. It senses things as close as 10 centimeters (4 inches) and as far away as 150 meters (almost 500 feet). Coupled with other sensors and cameras (especially useful for resolving distant objects), and fed through a miniaturized central control unit, the S3 could be a core unit in a very aware vehicle.

S3 LIDAR is a black box that prevents accidents, rather than recording them

Quanergy has signed supply or development agreements with Daimler AG, Hyundai Motor Co., Kia Motors Corp. and the Nissan-Renault Alliance, among others.

IEEE Spectrum explains the operation of the LIDARs we now see on top of Google cars, and the less obtrusive item made by Quanergy.

“LIDAR systems work by firing laser pulses out into the world and then watching to see if the light reflects off of something. By starting a timer when the pulse goes out and then stopping the timer when the sensor sees a reflection, the LIDAR can do some math to figure out how far away the source of the reflection is. And by keeping careful track of where it’s pointing the laser, the LIDAR gets all of the data that it needs to place the point in 3D space.

“In order to build up a complete view of the world, a LIDAR needs to send out laser pulses all over the place. The way to do it is to have one laser and one sensor and then move them both around a whole bunch, usually by scanning the whole LIDAR unit up and down or spinning it in a circle or both. You’ve probably seen these things whirling around on the top of autonomous cars. And they work fine, but they’ve got some problems: namely, they’re kind of big, they’re stupendously expensive, and because they have to be moving all the time, they’re not really reliable enough for consumer use.
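The "some math" in the timing description above is simple enough to sketch. The Python below is only an illustration, not Quanergy's or anyone else's firmware: the function names and the azimuth/elevation convention (x forward, y left, z up) are assumptions made for this example.

```python
import math

SPEED_OF_LIGHT_M_PER_S = 299_792_458.0  # speed of light in vacuum


def range_from_round_trip(elapsed_s: float) -> float:
    """Distance to the reflecting object in meters.

    The pulse travels out and back, so the one-way distance
    is half of the total path covered in elapsed_s seconds.
    """
    return SPEED_OF_LIGHT_M_PER_S * elapsed_s / 2.0


def point_from_return(elapsed_s: float, azimuth_deg: float, elevation_deg: float):
    """Place one return in 3D space from its timing and pointing direction.

    Assumed convention (hypothetical): x forward, y left, z up;
    azimuth measured in the horizontal plane, elevation above it.
    """
    r = range_from_round_trip(elapsed_s)
    az = math.radians(azimuth_deg)
    el = math.radians(elevation_deg)
    x = r * math.cos(el) * math.cos(az)
    y = r * math.cos(el) * math.sin(az)
    z = r * math.sin(el)
    return (x, y, z)


if __name__ == "__main__":
    # A reflection that arrives one microsecond after the pulse left.
    print(range_from_round_trip(1e-6))
    print(point_from_return(1e-6, azimuth_deg=30.0, elevation_deg=5.0))
```

A round trip of one microsecond works out to roughly 150 meters, which is about the maximum range quoted for the S3.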

Screen capture of S3 operation. Limited in horizontal and vertical coverage, it would take multiple arrays of S3s to give 360-degree coverage in all planes

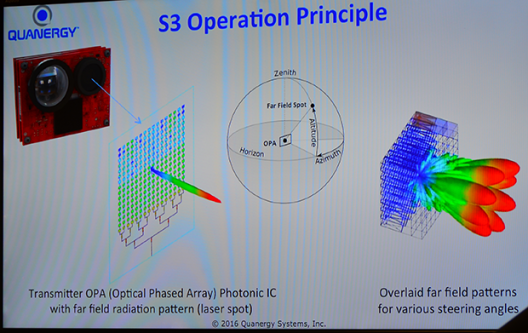

“This is where Quanergy comes in: its solid-state LIDAR has no moving parts. Zero. Not even micromirrors or anything like that. Instead, Quanergy’s LIDAR uses an optical phased array as a transmitter, which can steer pulses of light by shifting the phase of a laser pulse as it’s projected through the array:

“Each pulse is sent out in about a microsecond, yielding about a million points of data per second. And because it’s all solid-state electronics, you can steer each pulse completely independently, sending out one pulse in one direction and another pulse in a completely different direction just one microsecond later. Essentially, you can think of Quanergy’s chip as acting like a conventional glass lens, except that it’s a lens that you can reshape into any shape you want every single microsecond.”
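For readers curious how shifting phase can aim a beam with no moving parts, here is a rough sketch using the textbook model of a uniform line of emitters. It is not a description of Quanergy's chip: the 905 nm wavelength, the half-wavelength emitter spacing, the 64-element count and both function names are assumptions chosen purely for illustration.

```python
import cmath
import math


def element_phases(steer_deg, n_elements, pitch_m, wavelength_m):
    """Phase offset (radians) for each emitter so the beam points at steer_deg.

    Textbook uniform-linear-array relation: adjacent emitters differ by
    2*pi*pitch*sin(angle)/wavelength, applied with opposite sign so the
    wavefronts line up in the chosen direction.
    """
    step = -2.0 * math.pi * pitch_m * math.sin(math.radians(steer_deg)) / wavelength_m
    return [i * step for i in range(n_elements)]


def array_intensity(look_deg, phases, pitch_m, wavelength_m):
    """Relative far-field intensity toward look_deg (1.0 = all emitters in phase)."""
    k = 2.0 * math.pi / wavelength_m
    s = math.sin(math.radians(look_deg))
    total = sum(cmath.exp(1j * (k * i * pitch_m * s + p)) for i, p in enumerate(phases))
    return abs(total) ** 2 / len(phases) ** 2


if __name__ == "__main__":
    WAVELENGTH_M = 905e-9         # assumed near-infrared wavelength
    PITCH_M = WAVELENGTH_M / 2.0  # assumed spacing, avoids duplicate beams
    N_ELEMENTS = 64               # assumed emitter count

    phases = element_phases(20.0, N_ELEMENTS, PITCH_M, WAVELENGTH_M)
    # Scan from -60 to +60 degrees in 0.1 degree steps and find the brightest direction.
    angles = [a / 10.0 for a in range(-600, 601)]
    brightest = max(angles, key=lambda a: array_intensity(a, phases, PITCH_M, WAVELENGTH_M))
    print("beam points at", brightest, "degrees")
```

Changing the first argument of `element_phases` re-aims the beam by changing numbers alone, which is the sense in which the quoted passage compares the chip to a lens that can be reshaped every microsecond.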

As these things are reduced to computer chip size and become available as consumer products, we’ll doubtless see their application in conventional light aircraft for unconventional uses.